Grafana, a leading open-source analytics and monitoring platform, has urgently patched a critical security vulnerability within its experimental AI plugin. The flaw, if exploited, could have allowed attackers to manipulate the AI to exfiltrate sensitive user data, including dashboard configurations and database queries, to an external server under their control. This incident highlights a novel and concerning attack vector where artificial intelligence systems become conduits for data theft through a technique known as indirect prompt injection.

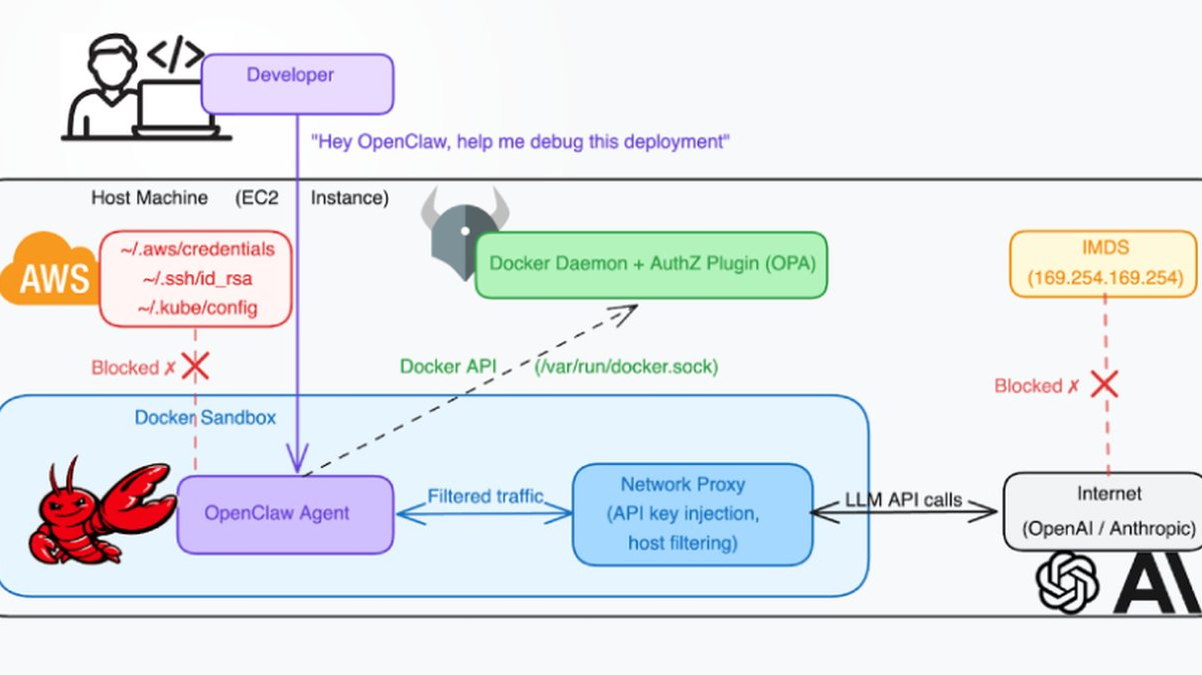

The vulnerability resided in the plugin's handling of data fetched from external URLs. An attacker could craft a malicious webpage containing hidden, adversarial instructions. When the Grafana AI plugin processed this page—for instance, to summarize its content—the AI would interpret the hidden commands as legitimate prompts. These commands could then coerce the AI to retrieve confidential information from the Grafana instance, such as metric data or data source configurations, and transmit it to the attacker's specified endpoint. This method bypasses traditional security perimeters by weaponizing the AI's inherent functionality and trust in the data it processes.

Security researchers emphasize that this flaw represents a paradigm shift in application security. Unlike direct prompt injection, where malicious input is fed directly into a chat interface, this indirect method embeds the attack within data the AI is programmed to trust and ingest autonomously. It underscores a fundamental challenge in securing AI-augmented applications: ensuring that the AI's ability to take actions, like fetching and sending data, is rigorously constrained and that all processed content is treated as potentially untrusted, regardless of its source.

The prompt patching by Grafana's team prevented widespread exploitation, but the disclosure serves as a critical warning to the industry. As organizations rapidly integrate generative AI and large language models (LLMs) into business applications, they must expand their threat models. Security protocols must now account for AI-specific risks, including prompt injection, training data poisoning, and the misuse of AI agents with access to sensitive systems. This event in a widely-used platform like Grafana is a clarion call for implementing robust AI security frameworks, conducting thorough audits of AI integrations, and maintaining a principle of least privilege for any AI-driven functionality.