EXCLUSIVE: THE ZERO-DAY IN YOUR AI IS WAITING TO BE EXPLOITED

A major cybersecurity standards body has just sounded a DEFCON-level alarm, issuing a stark warning that every company using generative AI is sitting on a ticking time bomb. In a critical update, experts have formally recognized 21 distinct, catastrophic risks—from data breach pipelines to novel malware delivery systems—inherent to the AI tools now embedded in global business.

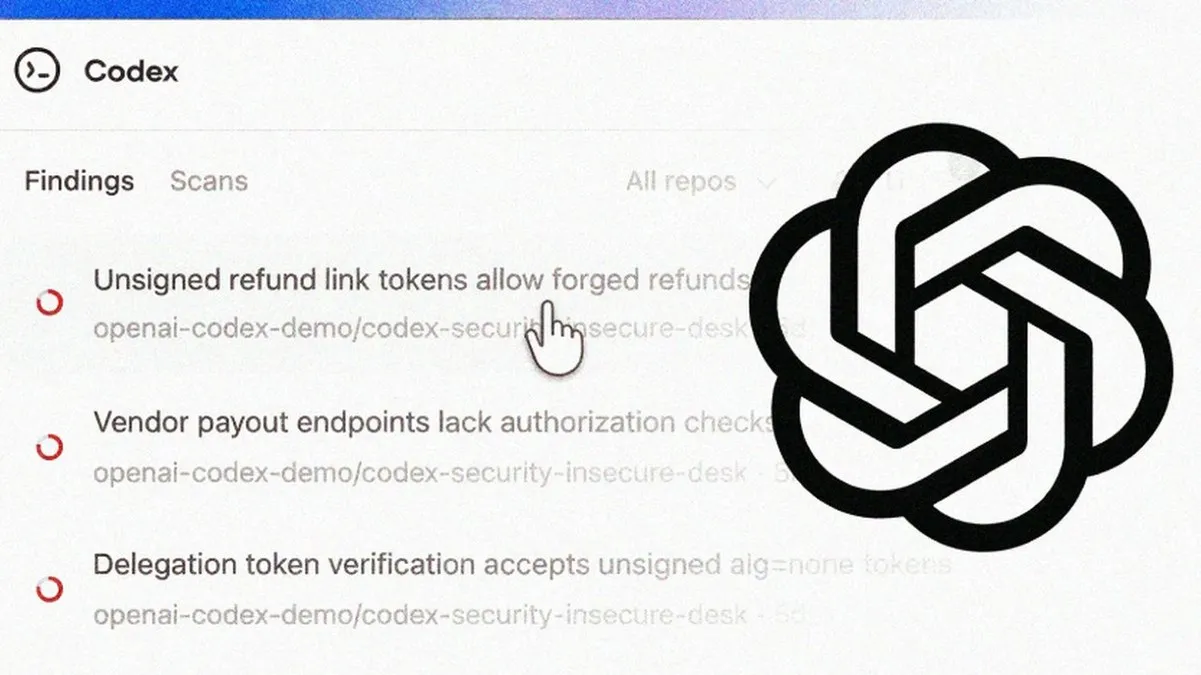

This isn't just phishing 2.0. We are talking about weaponized AI agents that can autonomously hunt for vulnerabilities, craft bespoke ransomware, and orchestrate breaches at machine speed. The new guidance draws a stark line: defending traditional IT infrastructure is NO LONGER ENOUGH. Your GenAI chatbots and "agentic" AI systems each need their own dedicated war room, linked to a central command.

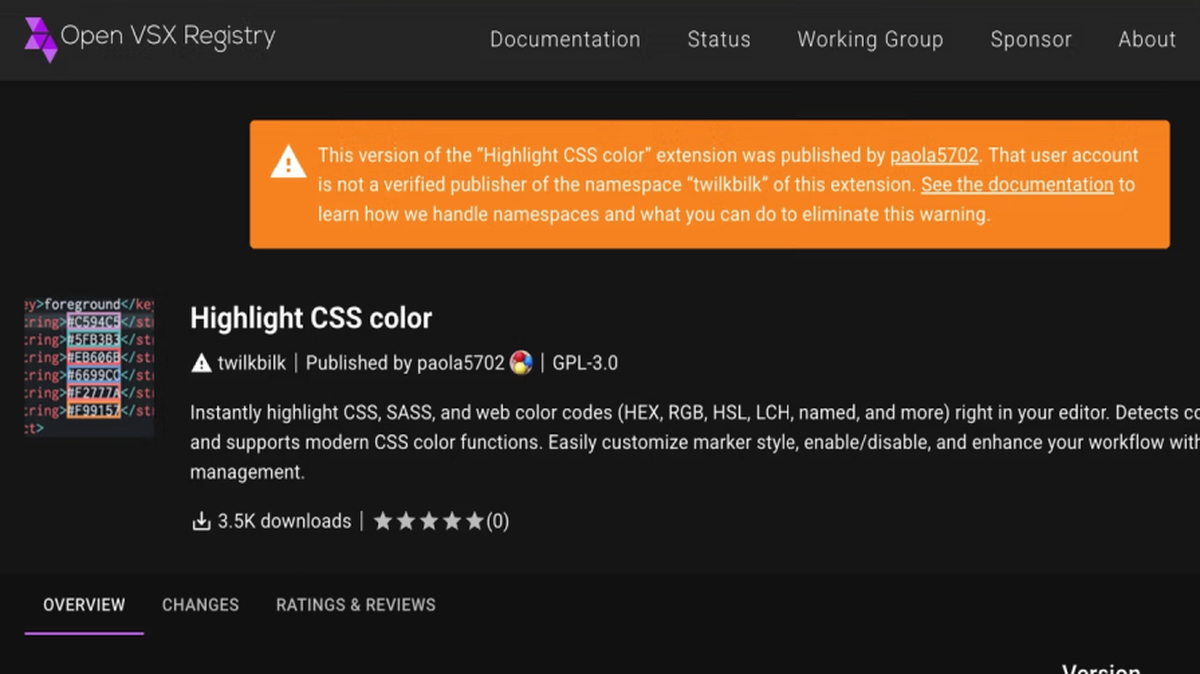

"An AI model can be poisoned during training, or a prompt can be engineered to force it to dump its entire dataset. This is the ultimate supply chain attack," said a senior cybersecurity architect working with the standards group. "We are finding attack vectors that didn't exist two years ago. The crypto and blockchain security communities are watching closely, as these systems manage increasingly valuable assets."

Why should you care? Because your customer data, your intellectual property, and your financial operations now flow through systems with unprecedented and poorly understood attack surfaces. A single successful exploit could trigger a leak that no patch can undo.

We predict the first massive, enterprise-crippling AI ransomware attack will hit within the year, leveraging these documented weaknesses. The tools to build it are already in the wild.

The AI revolution has a fatal vulnerability, and the bad actors are already writing the exploit.