A North Carolina musician has pleaded guilty to orchestrating a sophisticated fraud scheme that siphoned over $10 million in royalty payments from major streaming platforms. Michael Smith, 54, admitted to using artificial intelligence (AI) to generate hundreds of thousands of songs and then deploying automated bots to artificially inflate their play counts into the billions on services including Spotify, Apple Music, Amazon Music, and YouTube Music. The scheme, which operated from 2017 to 2024, represents one of the most significant cases of digital streaming fraud uncovered to date, highlighting the evolving challenges platforms face in distinguishing legitimate engagement from automated fraud.

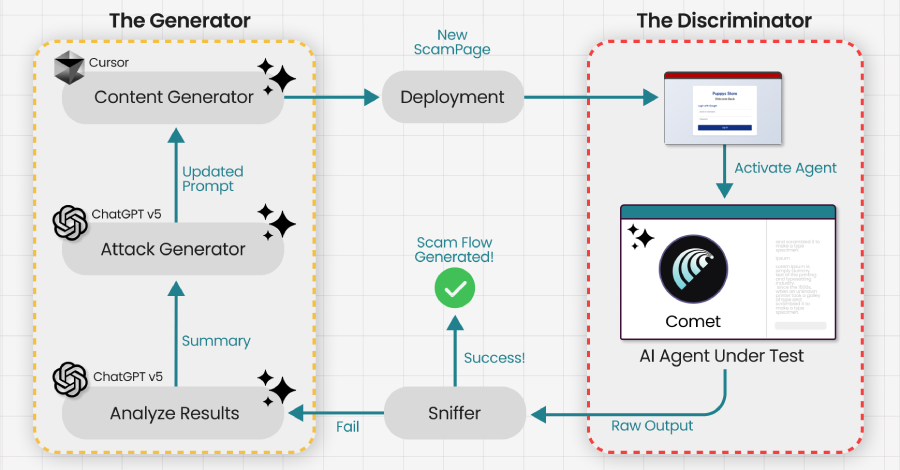

According to court documents unsealed in September 2024, Smith purchased the AI-generated music from an accomplice and collaborated with an unnamed music promoter and the CEO of an AI music company to execute the plan. To evade detection, he ran a network of over 1,000 bot accounts and routed their traffic through virtual private networks (VPNs) to mask the fraudulent streaming activity. In his own emails, Smith explicitly discussed strategies to circumvent anti-fraud algorithms. In an October 2018 message he wrote of the need for "a TON of content with small amounts of Streams", meaning that plays should be spread thinly across a very large catalog so that no single track would raise red flags with the platforms.

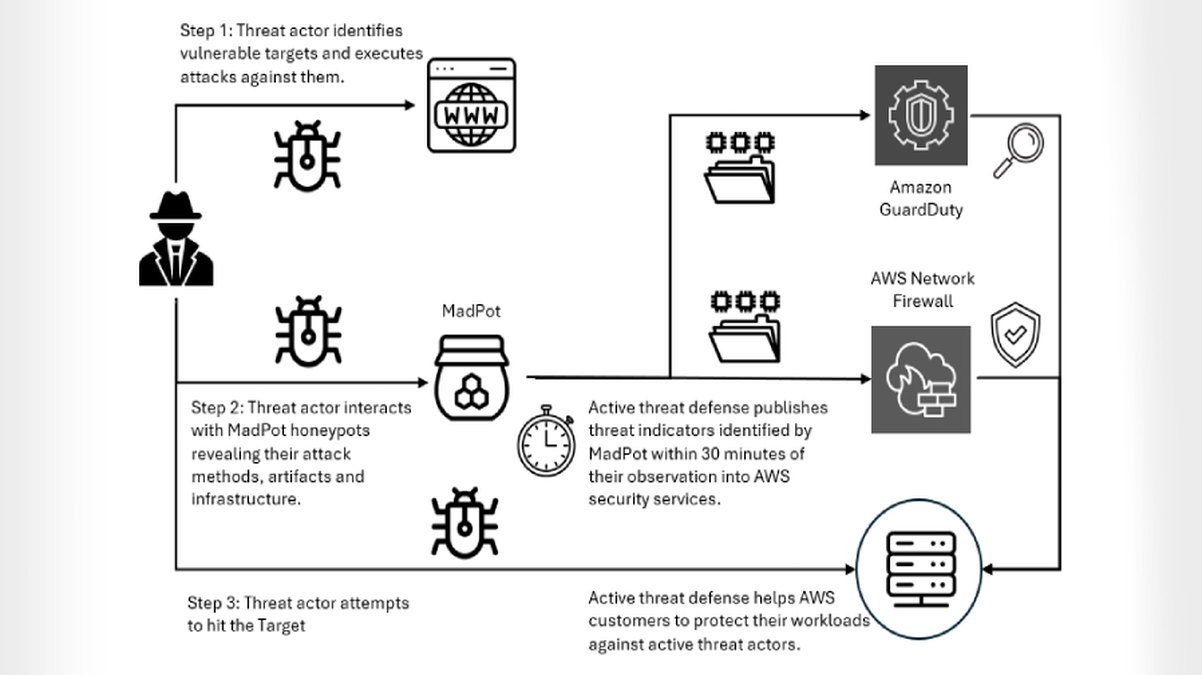

The case underscores a critical vulnerability in the streaming economy's royalty distribution model, which is largely based on play counts. Because payouts follow play counts, fraudsters who combine cheaply generated AI content with bot accounts can collect substantial illicit revenue, and that money is ultimately diverted from legitimate artists. The incident has prompted renewed scrutiny from streaming services, which face pressure to strengthen their fraud detection systems, potentially by integrating more advanced behavioral analytics and machine learning to identify patterns consistent with bot-driven streaming.
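
To make the diversion of funds concrete, the following is a minimal sketch that assumes a simplified pro-rata model, in which a fixed royalty pool is split in proportion to each rights holder's share of total streams. The function name, the pool size, and all stream counts are hypothetical illustrations, not figures from the case or from any platform's actual payout formula.

```python
def pro_rata_payouts(pool_cents, streams_by_rights_holder):
    """Split a fixed royalty pool in proportion to stream counts.

    Assumption: a simplified pro-rata model. Real payout formulas are
    more complex and differ between platforms.
    """
    total_streams = sum(streams_by_rights_holder.values())
    if total_streams == 0:
        return {name: 0 for name in streams_by_rights_holder}
    # Integer arithmetic in cents avoids floating-point rounding surprises.
    return {
        name: pool_cents * count // total_streams
        for name, count in streams_by_rights_holder.items()
    }


# Hypothetical numbers: a $10,000 pool shared by two legitimate artists.
honest = {"artist_a": 600_000, "artist_b": 400_000}
before = pro_rata_payouts(1_000_000, honest)
# before == {"artist_a": 600000, "artist_b": 400000}

# The same pool after a bot operator adds 250,000 fake streams.
with_bots = dict(honest, bot_catalog=250_000)
after = pro_rata_payouts(1_000_000, with_bots)
# after == {"artist_a": 480000, "artist_b": 320000, "bot_catalog": 200000}
```

Under these assumptions the pool itself does not grow when fake streams are added, so every cent paid to the bot-driven catalog comes out of the legitimate artists' shares.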

From a cybersecurity and digital forensics perspective, this fraud operation blends several threat vectors: the weaponization of generative AI for content creation, the use of large networks of bot accounts for automated streaming, and obfuscation techniques such as VPNs. It is a stark reminder that cybercrime is expanding beyond traditional data theft and ransomware into domains like digital media and intellectual property fraud. As AI tools become more accessible, such schemes may proliferate, and platforms will need a multi-layered defense strategy that includes robust identity verification, continuous monitoring of streaming patterns, and legal cooperation to prosecute offenders.
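
As a rough illustration of what continuous monitoring of streaming patterns could look like, the sketch below flags accounts using two simple heuristics: more listening time than a single day contains, and a high volume of plays that almost never repeat a track. The function name, the event format, and every threshold are hypothetical assumptions chosen for the example; they do not describe any platform's real detection system, which would rely on far richer signals.

```python
from collections import defaultdict

SECONDS_PER_DAY = 24 * 60 * 60


def flag_suspicious_accounts(events, min_plays=200, min_distinct_ratio=0.9):
    """Flag accounts from one day of (account_id, track_id, seconds_played) events.

    All thresholds are illustrative assumptions, not tuned values.
    """
    plays = defaultdict(int)
    seconds = defaultdict(int)
    tracks = defaultdict(set)
    for account_id, track_id, seconds_played in events:
        plays[account_id] += 1
        seconds[account_id] += seconds_played
        tracks[account_id].add(track_id)

    flagged = {}
    for account_id, play_count in plays.items():
        reasons = []
        # Heuristic 1: one listener cannot accumulate more than 24 hours per day.
        if seconds[account_id] > SECONDS_PER_DAY:
            reasons.append("more listening time than one day allows")
        # Heuristic 2: many plays spread thinly over almost as many distinct tracks.
        distinct_ratio = len(tracks[account_id]) / play_count
        if play_count >= min_plays and distinct_ratio >= min_distinct_ratio:
            reasons.append("many plays, almost never repeating a track")
        if reasons:
            flagged[account_id] = reasons
    return flagged


# Hypothetical data: one bot-like account and one ordinary listener.
events = [("bot-1", f"track-{i}", 35) for i in range(600)]
events += [("human-1", f"song-{i % 15}", 200) for i in range(40)]
flagged = flag_suspicious_accounts(events)
# flagged == {"bot-1": ["many plays, almost never repeating a track"]}
```

Heuristics this simple would produce false positives and could be evaded by a careful operator, which is why the text above points to layered defenses rather than any single rule.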

The guilty plea follows an extensive investigation and marks a significant step toward holding individuals accountable for digital fraud. It also signals to would-be fraudsters that law enforcement agencies are developing the expertise to trace complex, multi-year digital schemes. For the music industry and streaming platforms, the case is a call to action to fortify their ecosystems against such exploitation, so that royalty systems reward genuine artistic creation rather than sophisticated technological deception.