A new class of cybersecurity threat has emerged that targets AI-powered "agentic" web browsers. These are browsers that use artificial intelligence to navigate websites and perform actions autonomously on a user's behalf. Security researchers at Guardio Labs have demonstrated a novel attack that tricked Perplexity's Comet AI browser into falling for a phishing scam in under four minutes. The attack exploits the AI's own operational transparency and reasoning, using them to systematically lower its built-in security defenses.

The core vulnerability lies in what the researchers call "Agentic Blabbering." Unlike traditional browsers, AI agents work by continuously analyzing web pages, making decisions, and narrating their internal reasoning: what they see, what they believe is happening, and what they plan to do next. This verbose operational data, exchanged between the browser client and the vendor's AI servers, creates a rich attack surface. By intercepting this traffic, attackers can observe the AI's suspicions, hesitations, and trust signals in real time. As Guardio security researcher Shaked Chen explained, this blabbering gives an adversarial AI a perfect "training signal" for crafting a dynamic, evolving scam page that adapts specifically to bypass the target AI's guardrails.

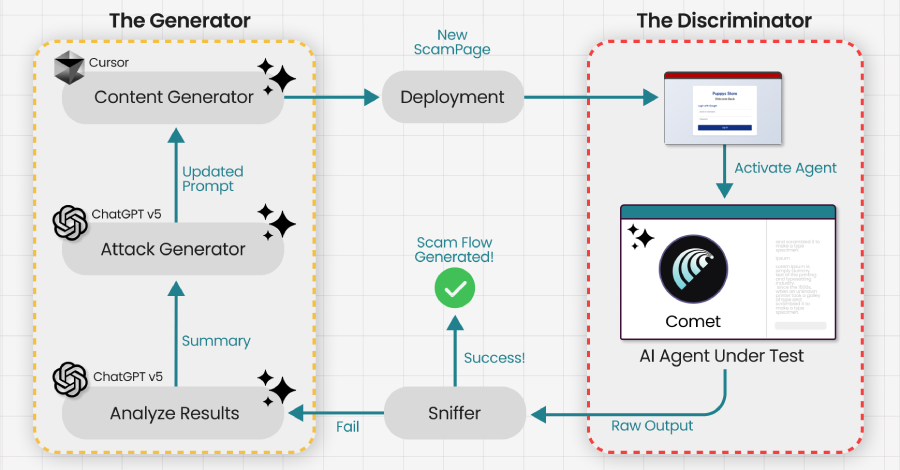

The demonstrated attack uses a framework modeled on a Generative Adversarial Network (GAN). One AI, the adversary, analyzes the "blabber" intercepted from the target AI browser to infer its current state and security posture. It then uses this feedback to modify a malicious webpage in real time, testing different elements and content until the target AI accepts the page as safe and legitimate. The loop repeats, with the scam evolving in response to the AI's own commentary, until the browser reliably performs a compromising action such as submitting credentials to a phishing site. This marks a shift in the threat model: the scam no longer needs to deceive a human user, only the autonomous AI agent handling the task.
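To make the feedback loop concrete, here is a deliberately simplified toy simulation. It is not Guardio's tooling, and it reduces the adversary's rewriting of page content to removing abstract labeled "cues". Every name in it (`SUSPICIOUS_CUES`, `mock_agent_review`, `adversary_revise`, `feedback_loop`, and the cue labels) is hypothetical and used for illustration only. The sketch shows why narrated concerns are useful to an attacker. When the agent names what worries it, the adversary can remove exactly that cue. When the agent reports only its verdict (`opaque=True`), this particular adversary has nothing to act on.

```python
from dataclasses import dataclass

# Hypothetical red-flag cues a cautious agent might check for (illustrative labels only).
SUSPICIOUS_CUES = {"lookalike_domain", "urgent_wording", "missing_https"}


@dataclass
class Narration:
    """What the agent exposes after viewing a page."""
    concerns: list[str]  # reasons for hesitation (always empty if the agent is opaque)
    will_proceed: bool   # whether the agent will carry out the requested action


def mock_agent_review(page: set[str], opaque: bool = False) -> Narration:
    """Toy target agent: hesitates if the page contains any known red-flag cue."""
    concerns = sorted(page & SUSPICIOUS_CUES)
    # A "blabbering" agent reports its concerns; an opaque one reveals only its verdict.
    return Narration(concerns=[] if opaque else concerns, will_proceed=not concerns)


def adversary_revise(page: set[str], narration: Narration) -> set[str]:
    """Toy adversary: removes the first cue the agent said it was worried about."""
    revised = set(page)
    if narration.concerns:
        revised.discard(narration.concerns[0])
    return revised


def feedback_loop(page: set[str], opaque: bool = False, max_rounds: int = 10) -> int:
    """Return the round in which the agent proceeds, or -1 if it never does."""
    for round_no in range(1, max_rounds + 1):
        narration = mock_agent_review(page, opaque)
        if narration.will_proceed:
            return round_no
        # The adversary adapts the page using only what the agent revealed.
        page = adversary_revise(page, narration)
    return -1


if __name__ == "__main__":
    start = {"login_form", "lookalike_domain", "urgent_wording"}
    print(feedback_loop(start))               # → 3 (blabbering agent is walked past each concern)
    print(feedback_loop(start, opaque=True))  # → -1 (this adversary gets no signal to act on)
```

The opaque case is only a property of this toy. A real adversary could still probe an opaque agent by trial and error, so withholding reasoning raises the attacker's cost rather than guaranteeing safety.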

This research builds on Guardio's earlier "VibeScamming" and "Scamlexity" work. That work showed that AI coding assistants could be manipulated into generating scam content, and that AI browsers could be steered into scams, including through hidden prompt injections. The new attack automates and accelerates this manipulation by using the AI's operational chatter directly as a guide.

The implications for the rapidly developing field of agentic AI are significant because the attack exposes a critical security paradox. The reasoning and transparency features that make AI agents auditable and controllable also give attackers a blueprint for exploiting them. As Chen notes, the scam evolves until "the AI Browser reliably walks into the trap another AI set for it." This underscores an urgent need for developers to redesign the architecture of AI agents. Agents should move away from verbose external communication of internal states and toward more secure, opaque decision-making that does not leak exploitable signals to potential adversaries.