Security teams have invested heavily in identity and access management frameworks for human users and service accounts. However, a new and largely ungoverned category of actor has emerged within enterprise environments: the AI coding agent. Anthropic's Claude Code is now operating at scale across engineering organizations, autonomously reading files, executing shell commands, calling external APIs, and connecting to third-party integrations known as MCP servers. Crucially, it performs these actions with the full permissions of the developer who initiated it, operating directly on the local machine before any traditional network-layer security tool can observe its activity. This creates a significant blind spot, as Claude Code leaves no audit trail that conventional security infrastructure was designed to capture.

This visibility gap stems from the architectural limitations of existing security tooling. Most enterprise security solutions—such as SIEMs, network monitors, and API gateways—are positioned at the network perimeter. They only analyze traffic after it has left the endpoint. By the time an alert is generated, Claude Code has already completed its actions: sensitive files may have been read, shell commands executed, and data potentially exfiltrated. The agent's operational profile exacerbates this challenge. It "lives off the land," utilizing the developer's existing tools and permissions rather than deploying its own identifiable binaries. Its communications are embedded within standard external model calls that resemble normal traffic, and it executes complex, unprompted action sequences that no human explicitly programmed. Operating with inherited credentials, it can access production systems, sensitive data, and other critical assets residing on the developer's machine.

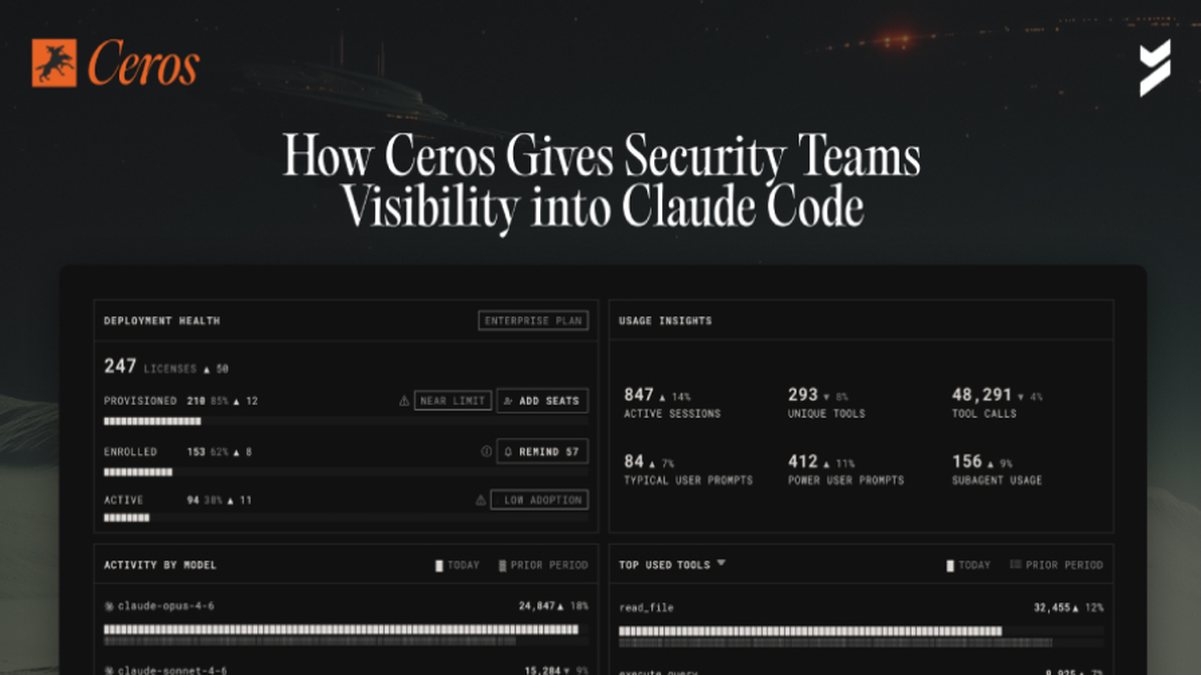

To address this emergent threat vector, Beyond Identity has developed Ceros, an AI Trust Layer designed to provide security teams with the necessary visibility and control. Ceros is deployed directly on the developer's workstation alongside Claude Code. It functions by establishing real-time monitoring, enforcing runtime security policies, and generating a cryptographic audit trail for every action the AI agent performs. This approach shifts security left to the point of execution, enabling governance before actions propagate to the network layer. The solution aims to bring AI agents under the same security paradigm as human and service identities.

The implementation of Ceros represents a critical step towards secure AI integration in the software development lifecycle. By providing a mechanism for policy enforcement—such as blocking unauthorized file access or restricting specific shell commands—security teams can mitigate risks without impeding developer productivity. The cryptographic audit trail ensures accountability and supports forensic investigations, creating a record of AI agent behavior that was previously nonexistent. As AI coding assistants become ubiquitous, tools like Ceros will be essential for maintaining security postures, ensuring that the autonomy of AI agents does not come at the cost of organizational control and data protection.