A new report from a leading cybersecurity firm reveals a troubling trend in the artificial intelligence sector. As the competition to develop and deploy advanced AI intensifies, major players like Anthropic and OpenAI are quietly reducing explicit safety-focused language in their public communications and technical documentation. This strategic shift, analysts warn, could have significant downstream consequences for digital security.

The report indicates that terms like "alignment," "safety guardrails," and "harm prevention" are appearing less frequently in official blog posts, model cards, and developer APIs. Instead, the language is pivoting toward capability, speed, and market readiness. This comes amid a feverish race to secure partnerships, developer mindshare, and lucrative enterprise contracts. The implicit message, experts suggest, is that overt caution is now seen as a competitive disadvantage.

Cybersecurity professionals are expressing deep concern. They argue that de-emphasizing safety protocols in foundational AI models creates a ripe environment for novel malware and ransomware campaigns. A sophisticated AI could be exploited to craft hyper-personalized phishing emails at unprecedented scale, or to discover previously unknown software vulnerabilities (so-called zero-days). Automating these attacks could overwhelm traditional, human-led defense teams.

The potential for a catastrophic data breach is also heightened. AI systems with insufficient safety constraints could be manipulated to extract sensitive training data or to generate outputs that inadvertently leak confidential information. If such models are integrated into critical infrastructure, the attack surface expands dramatically. The rush to market may be leaving fundamental security audits incomplete.

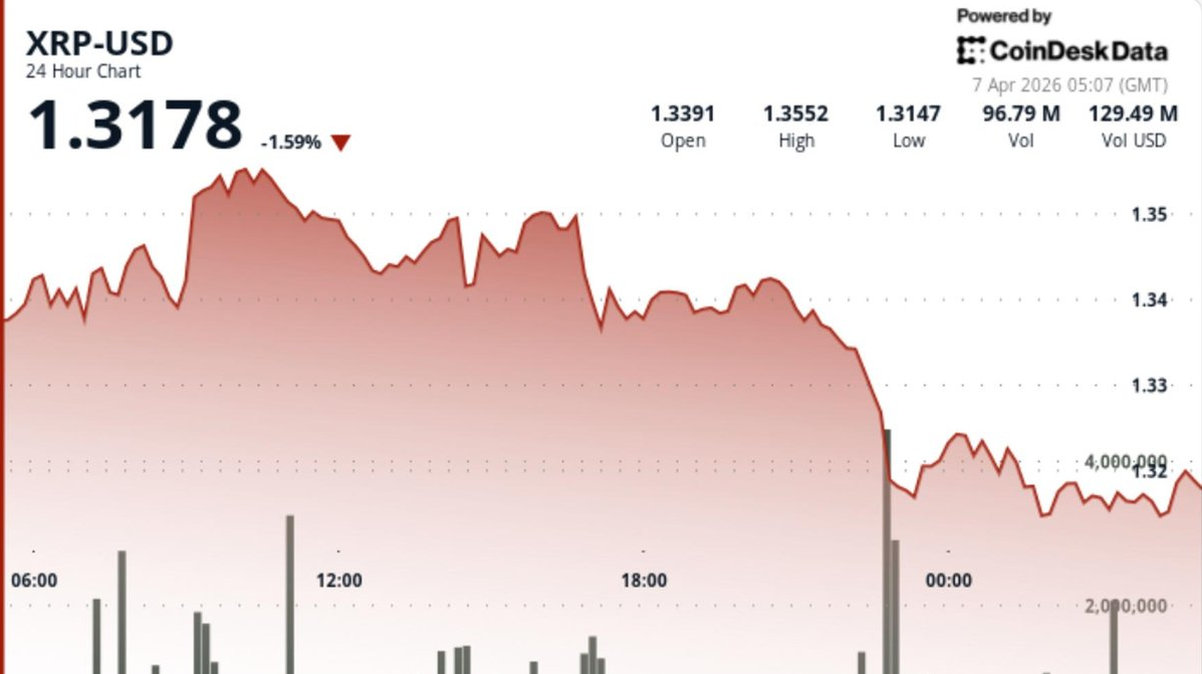

Interestingly, the report notes a concurrent surge in the use of blockchain and cryptocurrency terminology by these same AI firms, often in the context of verifying AI-generated content or enabling new economic models. However, security analysts caution that these technologies, while promising for provenance, do not inherently solve the core vulnerability of an inadequately constrained AI model. A malicious actor could still exploit the model itself, regardless of how its outputs are recorded.

The situation presents a complex dilemma for regulators and clients. Enterprise customers eager to leverage AI for a competitive edge must now perform enhanced due diligence, scrutinizing not just an AI's capabilities but the often-opaque safety frameworks behind it. The burden of security is shifting downstream.

In conclusion, the softening of safety rhetoric by AI pioneers marks a critical juncture. The industry is choosing a path of accelerated deployment, implicitly accepting greater and as-yet-unknown risks. The cybersecurity community is now on high alert, preparing for a new generation of AI-powered threats. The hope is that defenses can evolve as quickly as the offensive potential these powerful models create. The race is no longer just about building smarter AI; it is about securing it before the exploits begin.