A critical security vulnerability has been identified in the Claude AI coding environment that allows attackers to execute code remotely and steal sensitive API keys. The zero-day, discovered by cybersecurity researchers at Sentinel Labs, exposes a significant flaw in how the platform processes certain types of code snippets. Left unpatched, the vulnerability could lead to widespread data breaches and ransomware attacks targeting developers and their organizations.

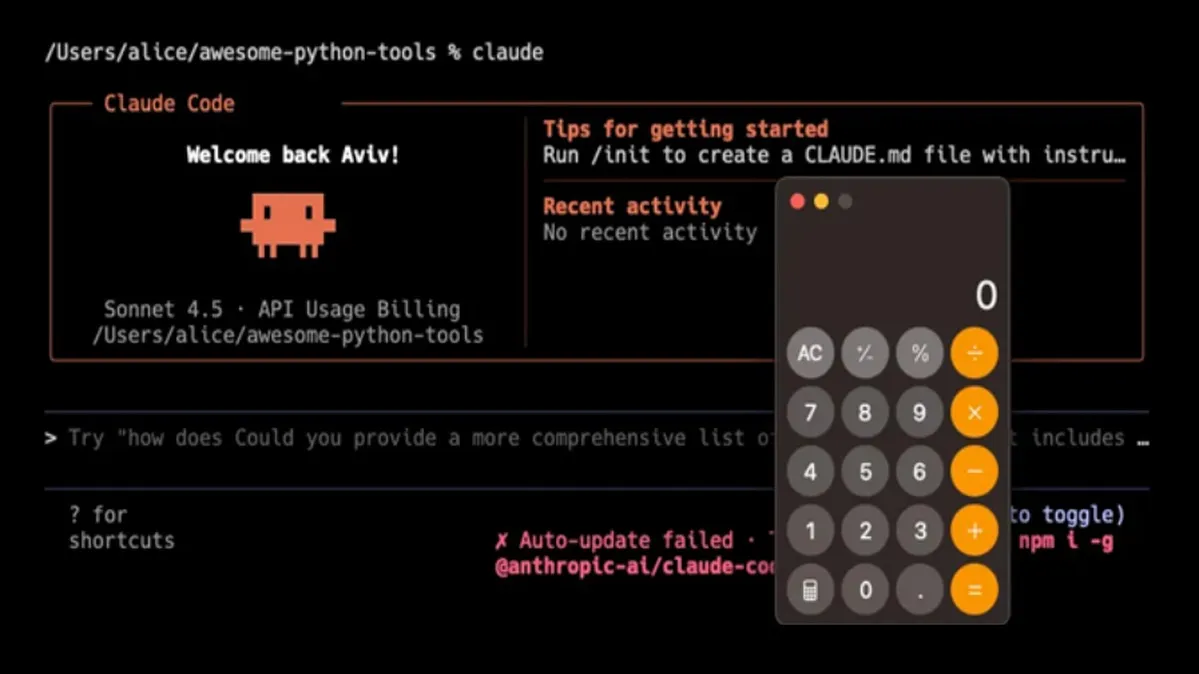

The exploit chain begins with a sophisticated phishing campaign in which attackers lure developers to malicious repositories containing seemingly benign code examples. Once a developer interacts with the code inside the Claude environment, a hidden payload is triggered. The payload exploits a memory corruption vulnerability to break out of the sandbox, giving the attacker remote code execution on the underlying host system.

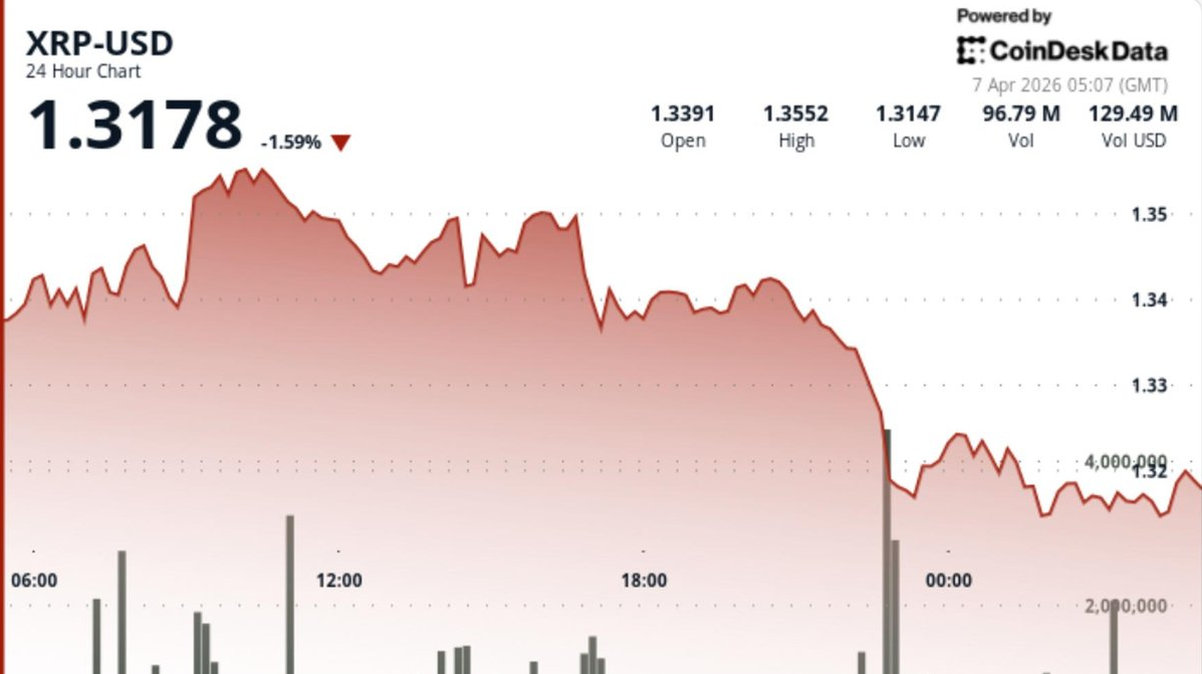

After gaining a foothold, the malware immediately scans for and exfiltrates API keys, cryptocurrency wallet credentials, and blockchain node access tokens stored on the compromised machine. Researchers confirmed that the attack framework is modular, allowing threat actors to deploy additional payloads, including ransomware that encrypts critical project files and demands payment in cryptocurrency.

"This is a classic supply chain attack targeting the tools developers trust daily," explained Dr. Anya Sharma, lead researcher at Sentinel Labs. "The attackers are not just after individual credentials; they are positioning themselves to compromise entire software pipelines and inject further vulnerabilities into downstream applications." The sophistication of the campaign suggests the involvement of a well-resourced, possibly state-sponsored, hacking group.

The discovery underscores the escalating threats in the software development lifecycle. As organizations increasingly rely on AI-assisted coding, these platforms become high-value targets. The incident serves as a stark reminder that no tool is inherently secure and that the principle of least privilege must extend to development environments. Security teams are urged to scrutinize AI-generated code before execution.
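One way to picture least privilege in a development workflow is to run an untrusted snippet in a child process that inherits none of the parent's secrets. The sketch below is a minimal illustration, not a sandbox: it only removes environment variables (where API keys often live) and runs the snippet in an empty temporary directory, and it would not stop the kind of memory corruption escape described above. The function name `run_with_scrubbed_env` and the `SAFE_ENV_VARS` allowlist are hypothetical names chosen for this example.

```python
import os
import subprocess
import sys
import tempfile

# Hypothetical allowlist: only variables the snippet genuinely needs.
# Anything not listed here (API keys, tokens) is never passed to the child.
SAFE_ENV_VARS = ("PATH", "LANG", "SYSTEMROOT")


def run_with_scrubbed_env(code: str, timeout: float = 5.0) -> subprocess.CompletedProcess:
    """Run `code` in a child Python process with a minimal environment."""
    env = {name: os.environ[name] for name in SAFE_ENV_VARS if name in os.environ}
    with tempfile.TemporaryDirectory() as workdir:
        return subprocess.run(
            [sys.executable, "-I", "-c", code],  # -I: isolated mode, ignores PYTHON* variables
            env=env,               # replaces the inherited environment entirely
            cwd=workdir,           # start in an empty scratch directory
            capture_output=True,
            text=True,
            timeout=timeout,       # raises subprocess.TimeoutExpired on runaway code
        )


if __name__ == "__main__":
    os.environ["EXAMPLE_API_KEY"] = "not-a-real-key"  # placeholder secret for the demo
    result = run_with_scrubbed_env(
        "import os; print(os.environ.get('EXAMPLE_API_KEY'))"
    )
    print(result.stdout.strip())  # the child cannot see the parent's variable
```

The child process can still read any file the current user can read, so this measure complements container or virtual machine isolation and does not replace it.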

In response to the disclosure, Anthropic, the creator of Claude, has released an emergency security patch. The update addresses the specific memory handling flaw and implements stricter sandboxing for code execution. The company also announced an expansion of its bug bounty program to encourage more external scrutiny of its systems.

For developers and organizations, immediate action is required. Security experts recommend rotating all API keys and cryptographic certificates that may have been exposed to the Claude environment in recent weeks. They also recommend segmenting networks to isolate development environments and deploying robust endpoint detection and response (EDR) solutions, both of which are now considered essential in the age of AI-powered development.
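Before rotating credentials, it helps to know which ones are sitting in plaintext on a development machine. The following sketch walks a project directory and flags lines that look like credentials, so that each hit can be reviewed and rotated. The regular expressions are illustrative assumptions, not an exhaustive or authoritative list of key formats, and `find_candidate_secrets` is a hypothetical helper name; a dedicated secret-scanning tool would be the better choice for real audits.

```python
import re
from pathlib import Path

# Illustrative patterns only; real credential formats vary by provider.
SECRET_PATTERNS = {
    "aws_access_key_id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "private_key_block": re.compile(r"-----BEGIN [A-Z ]*PRIVATE KEY-----"),
    "generic_assignment": re.compile(
        r"(?i)(?:api[_-]?key|secret|token)\w*\s*[:=]\s*['\"]?[A-Za-z0-9_\-]{16,}"
    ),
}


def find_candidate_secrets(root: Path, max_bytes: int = 1_000_000):
    """Yield (path, line_number, pattern_name) for lines that look like credentials."""
    for path in root.rglob("*"):
        try:
            # Skip directories and large files, which are unlikely to be config.
            if not path.is_file() or path.stat().st_size > max_bytes:
                continue
            text = path.read_text(encoding="utf-8", errors="ignore")
        except OSError:
            continue  # unreadable file: skip rather than abort the scan
        for line_number, line in enumerate(text.splitlines(), start=1):
            for name, pattern in SECRET_PATTERNS.items():
                if pattern.search(line):
                    yield path, line_number, name


if __name__ == "__main__":
    # Report locations only; never print the matched secret itself.
    for path, line_number, name in find_candidate_secrets(Path(".")):
        print(f"{path}:{line_number}: possible {name}")
```

The scan reports only file, line number, and pattern name so that its own output does not become another copy of the secret. A match is a prompt for review, not proof of exposure, and a clean result does not show that no credentials were exposed.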