A new wave of sophisticated cyberattacks is targeting the rapidly expanding infrastructure behind large language models (LLMs), exposing critical vulnerabilities where artificial intelligence meets enterprise IT. Security researchers warn that the rush to deploy and integrate these powerful AI systems has created a dangerous expansion of the attack surface, with poorly secured application programming interfaces (APIs) and management consoles acting as open doors for threat actors.

The core of the problem lies in exposed endpoints. Every LLM deployment depends on numerous connection points: APIs for integration, web interfaces for administration, and data pipelines for training. When these endpoints are not rigorously hardened, they become prime targets. Attackers actively scan for such weaknesses, using automated tools to find unprotected instances and compromise them to gain a foothold in an organization's AI systems and broader network infrastructure.
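Defenders can run the same kind of check against their own infrastructure before attackers do. The following is a minimal sketch, not a complete scanner: it sends a request with no credentials and interprets the response. The function names and the three-way classification are illustrative assumptions, and it should only be pointed at endpoints the organization owns.

```python
import urllib.error
import urllib.request


def classify_exposure(status: int) -> str:
    """Interpret the HTTP status of a request sent WITHOUT credentials."""
    if status in (401, 403):
        return "protected"      # the endpoint demanded authentication
    if 200 <= status < 300:
        return "exposed"        # the endpoint answered an anonymous caller
    return "inconclusive"       # other 4xx/5xx codes need manual review


def probe(url: str, timeout: float = 5.0) -> str:
    """Send one unauthenticated GET to an endpoint you own and classify it."""
    request = urllib.request.Request(url, method="GET")
    try:
        with urllib.request.urlopen(request, timeout=timeout) as response:
            return classify_exposure(response.status)
    except urllib.error.HTTPError as err:
        # urllib raises HTTPError for 4xx/5xx; the status code is still useful.
        return classify_exposure(err.code)
    except OSError:
        # Covers URLError (DNS failure, refused connection) and timeouts.
        return "unreachable"
```

Any endpoint that comes back as "exposed" is answering anonymous callers and deserves immediate review; "inconclusive" results still need a human look.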

Once inside, attackers have several options. Malware and ransomware can be deployed to cripple AI operations or hold valuable training data hostage. More insidiously, attackers can quietly exfiltrate the massive proprietary datasets used to train models, intellectual property that is highly valuable on illicit markets. A compromised LLM system can also be manipulated to generate malicious code, produce highly convincing phishing emails, or leak sensitive information through its own responses.

A particularly alarming trend is the emergence of AI-specific zero-day exploits. These target previously unknown vulnerabilities in the AI software stack itself, such as inference servers or GPU management layers. Because the flaws are unknown to vendors, no patches exist, which makes direct defense extremely difficult until the flaw is discovered and fixed. Attackers are investing significant resources to find and weaponize these vulnerabilities for high-value attacks.

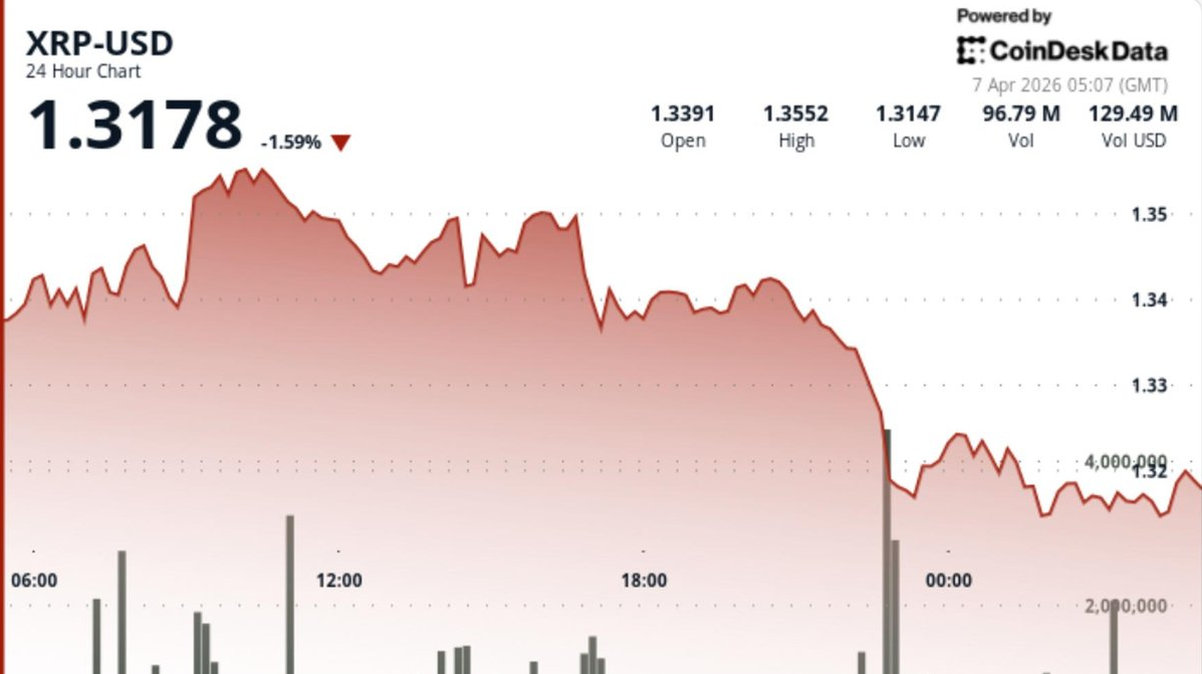

The financial motive is increasingly clear with the rise of crypto-centric attacks. Threat groups are tailoring ransomware to demand payment exclusively in cryptocurrency, exploiting the pseudonymity of blockchain transactions to make the money harder to trace. There are also documented cases of attackers using compromised cloud compute resources, accessed via LLM infrastructure, to mine cryptocurrency illicitly, running up massive bills for the victim organization while generating profit for themselves.
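Because illicit mining shows up on the cloud bill, a simple spend check can surface it early. The sketch below is one hypothetical heuristic, assuming hourly cost figures are already exported from the cloud provider: it flags any hour whose cost exceeds a multiple of the median of the preceding window. The function name, the 24-hour window, and the factor of 3 are illustrative choices, not recommended thresholds.

```python
from statistics import median


def flag_spend_spikes(hourly_costs, window=24, factor=3.0):
    """Return indices of hours whose cost exceeds factor x the trailing median.

    hourly_costs: list of per-hour compute costs, oldest first (assumed input).
    window: number of preceding hours used as the baseline.
    factor: how far above the baseline counts as a spike.
    """
    flagged = []
    for i in range(window, len(hourly_costs)):
        baseline = median(hourly_costs[i - window:i])
        # Skip hours with a zero baseline to avoid flagging every first charge.
        if baseline > 0 and hourly_costs[i] > factor * baseline:
            flagged.append(i)
    return flagged
```

A median baseline is used here rather than a mean so that a single earlier spike does not inflate the threshold and hide the next one.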

To combat these risks, experts advocate for a zero-trust approach applied specifically to AI deployments. This includes strict access controls, continuous monitoring of all API traffic, and comprehensive logging. All data in transit and at rest must be encrypted. Perhaps most importantly, organizations must assume that vulnerabilities exist and segment their LLM infrastructure from core business networks to limit the potential blast radius of any breach.
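In practice, the access-control and logging pieces can be as simple as checking every request at a gateway and recording the decision. The following is a minimal sketch under stated assumptions: the client registry, route allowlist, and logger name are hypothetical, and hashing API keys with SHA-256 assumes the keys are long, randomly generated secrets rather than user-chosen passwords.

```python
import hashlib
import hmac
import logging

logging.basicConfig(level=logging.INFO)
audit_log = logging.getLogger("llm_gateway.audit")  # hypothetical logger name


def hash_key(api_key: str) -> str:
    """Store and compare only hashes, never the raw API key."""
    return hashlib.sha256(api_key.encode("utf-8")).hexdigest()


def authorize(client_id, presented_key, route, key_hashes, allowed_routes):
    """Deny by default: a request passes only with a valid key AND an allowed route.

    key_hashes: dict of client_id -> SHA-256 hex digest of that client's key.
    allowed_routes: dict of client_id -> set of routes the client may call.
    """
    expected = key_hashes.get(client_id)
    presented = hash_key(presented_key)
    # compare_digest runs in constant time, which avoids timing side channels.
    key_ok = expected is not None and hmac.compare_digest(expected, presented)
    route_ok = route in allowed_routes.get(client_id, set())
    decision = key_ok and route_ok
    # Log every decision, allowed or denied, without logging the key itself.
    audit_log.info("client=%s route=%s allowed=%s", client_id, route, decision)
    return decision
```

Unknown clients, wrong keys, and routes outside a client's allowlist are all rejected the same way, and each attempt leaves an audit record that monitoring can pick up.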

The integration of LLMs offers tremendous business potential, but it also opens a new frontier for cybersecurity threats. Proactive hardening of every endpoint, vigilant threat hunting, and a culture of security-by-design are no longer optional. As AI becomes more embedded in critical operations, protecting its underlying infrastructure from malware, ransomware, and data-breach campaigns is paramount to safeguarding both innovation and enterprise security.